Junior engineers fail by not being good enough. Senior engineers fail by being good enough to make consequential mistakes. These are the 7 failure patterns Boris Cherny identified across his E4-to-E8 career at Meta — organized by who’s at fault, and how to avoid each one.

16 Patterns as a Causal Chain: Why the Order Matters More Than the List

The first version of this note organized Boris Cherny’s patterns by career stage — useful if you want to know what to focus on at your level. This version is different. It arranges the same 16 patterns as a causal chain, where each one enables the next. The point isn’t “do these things.”…

16 Patterns from E4 to E8: What Boris Cherny Learned at Each Stage

Boris Cherny spent seven years at Meta (2017-2024), going from E4 to E8, before leaving to lead Claude Code at Anthropic. Along the way he shipped products that failed, products that scaled, and infrastructure that still runs today. These are the 16 patterns he learned, organized by the career stage…

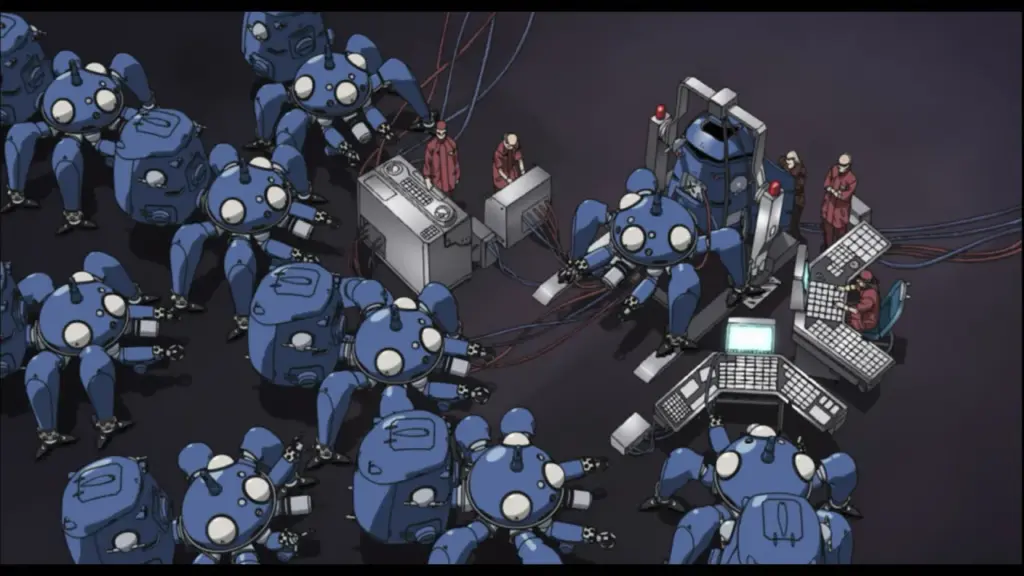

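A Record of the Great Steel Smelting

One night, I sat facing a machine.

This thing belches no smoke, rolls no iron, and makes no sound of meshing gears. It simply waits quietly on the other side of the screen, its posture almost humble. Yet it is precisely this humility that is unsettling: an overly obedient servant is often plotting something in the dark.

I work at a company called Meta. There are nearly eighty thousand people here, and a considerable portion of them are employed in building machines that can replace another portion. This is rather ironic in itself, but most of those caught up in it have no time to savor the irony.

I said to the machine: These days the whole world talks of artificial intelligence, and the sums invested rival those of the Great Steel Smelting of years past. But steel could at least be forged into plows and swords. What have these intelligences forged?

The machine pondered for a moment and answered: Better advertisements, more precise recommendations, faster replies.

I laughed. The laugh was probably like that of the servant beneath Rashomon: not because anything was funny, but because, apart from laughing, I did not know what expression to wear.…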

Day Nineteen: “Late Night with My AI”

I used to spend my evenings watching videos and playing games. But ever since I set up MyClaw, I’ve found myself chatting with my AI instead – about work, about life, about everything and nothing.

My AI is smarter and calmer than me most of the time. Being able to have conversations with an …